What Is AGI?

In 2016, more than 200 million people watched world Go champion Lee Sedol lose four games to one against a program called AlphaGo. A decade later, Lee returned to the arena, but this time he faced an AI he could converse with. His journey, from shock to retirement and then to collaboration with AI, mirrors the evolution of artificial intelligence over the past ten years.

From AlphaGo to ChatGPT, AI appears to be getting ever smarter. But this raises a fundamental question: are we building a specialized genius that excels in one area, or a versatile learner that, like a human, can master anything? The latter is known as Artificial General Intelligence (AGI).

Specialized Genius vs. True Learner

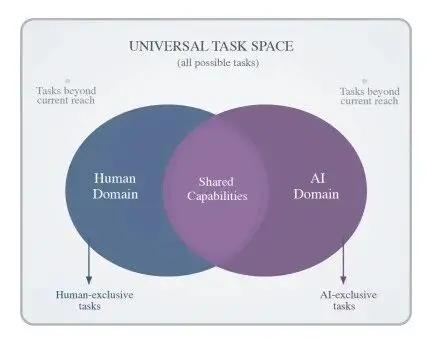

Most AI systems available today, including AlphaGo and the GPT series, fall under “narrow AI” or “specialized AI.” Think of them as top experts in their own fields who are effectively helpless outside them.

- AlphaGo can defeat world champions, yet it cannot answer a question as simple as which of the two girls standing in front of it is more beautiful. Its world is a 19x19 board, and it knows nothing beyond those rules.

- The GPT series can generate fluent text, but its grasp of language comes from “statistical pattern matching” over massive amounts of data. Image generators built on the same statistical principle can draw a hand with six fingers: they learned pixel patterns but never grasped the basic fact that a hand has five fingers.

Their common trait is exceptional performance within a closed domain, backed by vast training data. Faced with new scenarios or problems that lie outside that data, they “fail” or “speak nonsense.” They lack the human ability to generalize and to master new skills quickly from minimal experience.

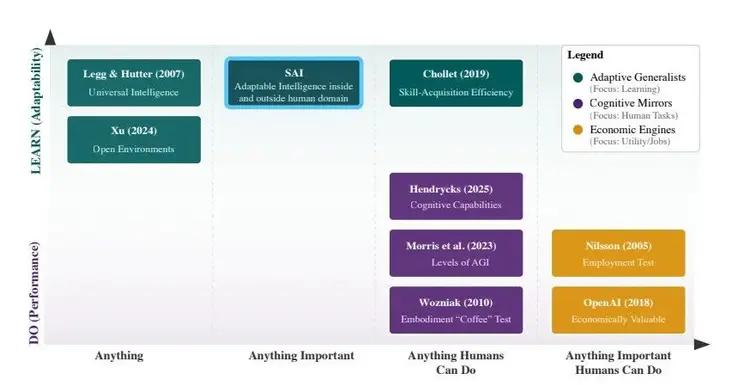

The true goal of AGI is to become the latter. It does not chase perfect scores on individual tests; it seeks human-level learning efficiency. Former Google researcher François Chollet offered an influential definition: true AGI should be able to face any new problem and, like a human, quickly understand and master it with minimal training data and computation.

It is a bit like hiring: who offers more long-term value, a programmer who only knows Java (narrow AI), or a recent graduate who can quickly pick up Python, Go, and even project management (AGI)? The answer is clear.

AGI Requires a Brain that Understands the Real World

To achieve this universal learning ability, what AI lacks is not more data but a “world model” of how the world operates.

Current large language models (LLMs) are essentially “masters of language statistics”: they learn about the world through text, so their cognition is limited to what language can express. The real world, however, runs on physical laws, causal relationships, and common sense. Without these, AI remains merely an advanced “parrot.”

World models are key to solving this dilemma. They enable AI to learn and predict object movements and physical laws through multi-sensory information such as vision, hearing, and touch. With a world model, AI can transition from “recognition” to “understanding,” from “passively executing code” to “actively planning actions.”

For example, a cleaning robot that relies only on preset visual-recognition routines might freeze when it encounters a broken branch on the road. With a world model, it can instead simulate the consequences of moving the branch based on its material and shape, then plan a detour or a safe cleanup path and keep working.
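To make the cleaning-robot contrast concrete, here is a minimal Python sketch under stated assumptions. The 5x5 grid, the `world_model` function, and both control routines are hypothetical, and the hand-written `world_model` stands in for what would really be a learned model. The reactive routine follows a preset rule and stops at the unrecognized branch; the planner instead runs a breadth-first search over states predicted by the model and finds a detour without ever touching the real branch. Only the detour option is modelled here, not moving the branch.

```python
from collections import deque

# Hypothetical toy map: '.' is open floor, 'B' is the broken branch.
GRID = [
    ".....",
    ".....",
    "..B..",
    ".....",
    ".....",
]
START, GOAL = (2, 0), (2, 4)  # (row, column): the branch sits between them
ACTIONS = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}


def world_model(state, action):
    """Predict the next position for an action without moving the real robot.

    Hand-written stand-in for a learned model (an assumption): it predicts that
    driving onto the branch or off the map ends badly and reports that as None.
    """
    r, c = state
    dr, dc = ACTIONS[action]
    nr, nc = r + dr, c + dc
    if not (0 <= nr < len(GRID) and 0 <= nc < len(GRID[0])):
        return None  # predicted outcome: leaves the map
    if GRID[nr][nc] == "B":
        return None  # predicted outcome: collides with the branch
    return (nr, nc)


def reactive_run(start, goal):
    """Preset rule: drive straight right and freeze at anything unrecognized."""
    r, c = start
    path = [start]
    while (r, c) != goal:
        if c + 1 >= len(GRID[0]) or GRID[r][c + 1] != ".":
            return path, "frozen"
        c += 1
        path.append((r, c))
    return path, "arrived"


def plan_with_model(start, goal):
    """Breadth-first search over states imagined by world_model; returns actions."""
    frontier = deque([start])
    came_from = {start: None}  # state -> (previous state, action taken)
    while frontier:
        state = frontier.popleft()
        if state == goal:
            break
        for action in ACTIONS:
            nxt = world_model(state, action)
            if nxt is not None and nxt not in came_from:
                came_from[nxt] = (state, action)
                frontier.append(nxt)
    if goal not in came_from:
        return None  # no safe plan exists under the model
    actions, state = [], goal
    while came_from[state] is not None:  # walk back from goal to start
        state, action = came_from[state]
        actions.append(action)
    return actions[::-1]


if __name__ == "__main__":
    print(reactive_run(START, GOAL)[1])  # frozen
    plan = plan_with_model(START, GOAL)
    print(len(plan), plan)  # a 6-step detour around the branch
```

The design point is that the planner never queries the real world while searching, so bad moves are ruled out in simulation rather than on the road.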

This is the crucial leap AGI needs: from statistical pattern fitting to causal reasoning and autonomous decision-making.

The Transition: From “Answering Questions” to “Completing Tasks”

While complete AGI has not yet been realized, we are clearly on the threshold of transitioning from “specialized AI” to “general intelligent agents.” The hallmark of this shift is that the core task of AI is moving from “content generation” to “task execution.”

- Google DeepMind unveiled AlphaEvolve in May 2025. Rather than merely executing algorithms, it can autonomously design and optimize advanced ones. It improved the best known lower bound for the “kissing number problem” in 11 dimensions, a problem that has occupied mathematicians for over 300 years, and sped up a key kernel in the architecture of Gemini, Google’s core AI model, by 23%. This suggests that AI is evolving from a “tool user” into a “tool inventor.”

- Baidu upgraded its search engine to a “Dual-Agent Engine.” Search used to be about “finding information”; now it is about “completing tasks.” Search for “what to do in Shanghai this weekend,” and the underlying agent automatically breaks the request into subtasks (checking the weather, booking train tickets, recommending attractions, and generating an itinerary), then delivers the result directly. The endpoint of a search is no longer a webpage link but a satisfied need.

- In industry, the changes are even more tangible. Kepler Robotics’s “Gen3.0” system uses force and tactile sensors to let industrial robots “feel, understand, and perform,” achieving generalized fine manipulation. Ping An Medical AI has built an early-screening system covering more than 90 diseases that has completed 1.5 million screenings, and its AI multidisciplinary consultation system has raised consistency with top experts’ treatment plans to over 92.5%.
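The task breakdown described for the search agent can be sketched as plan-then-execute over a dependency graph. This is a minimal Python (3.9+) illustration, not Baidu’s implementation: the subtask names, the dependency structure in `PLAN`, and the stub `run_tool` function are all hypothetical, and each stub returns a placeholder string where a real agent would call weather, ticketing, or map services. The standard library’s `graphlib.TopologicalSorter` orders the subtasks so that each one runs only after the results it depends on are available.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical plan for "what to do in Shanghai this weekend":
# each subtask maps to the set of subtasks whose results it needs.
PLAN = {
    "check_weather": set(),
    "book_train": set(),
    "recommend_attractions": {"check_weather"},
    "build_itinerary": {"check_weather", "book_train", "recommend_attractions"},
}


def run_tool(name, city, inputs):
    """Hypothetical stub tool call returning placeholder text, not real data."""
    if name == "check_weather":
        return f"weather forecast for {city}"
    if name == "book_train":
        return f"train ticket to {city}"
    if name == "recommend_attractions":
        return f"attractions in {city} suited to [{inputs['check_weather']}]"
    if name == "build_itinerary":
        order = ("check_weather", "book_train", "recommend_attractions")
        return " | ".join(inputs[key] for key in order)
    raise ValueError(f"unknown subtask: {name}")


def execute(plan, city):
    """Run subtasks in dependency order, passing each one its prerequisites' results."""
    results = {}
    for task in TopologicalSorter(plan).static_order():
        inputs = {dep: results[dep] for dep in plan[task]}
        results[task] = run_tool(task, city, inputs)
    return results


if __name__ == "__main__":
    for task, result in execute(PLAN, "Shanghai").items():
        print(f"{task}: {result}")
```

Running `execute(PLAN, "Shanghai")` returns one result per subtask, with `build_itinerary` last, because every other subtask feeds into it.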

These are not science fiction, and they all point in the same direction: in specific scenarios, AI is beginning to show coherent capabilities of perception, planning, decision-making, and execution, the embryonic form of AGI.

The Road Ahead: Costs, Controversies, and Human Roles

Of course, the path to AGI is full of challenges. The most pressing bottleneck is computational cost. Training a top AI model consumes power on the scale of a small city, and computing accounts for over 65% of R&D spending. Technological progress is rapidly lowering unit costs, but in the short term cost remains a looming threat.

A more fundamental controversy concerns the approach itself. Turing Award winner Yann LeCun and others argue that pursuing a “universal” AGI may be misguided, because human intelligence is itself “specialized” (skilled at social interaction but poor at precise calculation). They propose that AI should instead aim for “Superhuman Adaptive Intelligence (SAI)”: excelling in specific domains while adapting rapidly to new situations, rather than chasing an unrealistic “universality.”

Whatever the outcome of these debates, AGI’s societal impact is unavoidable. Rather than causing mass unemployment, it will profoundly reshape employment. Research from Yale University suggests that computational resources will be directed first toward automating “bottleneck” fields such as energy and scientific research, while manual labor and customer service jobs, being costly to replace, will continue to be done by humans.

The core question for the future is not whether unemployment will occur, but how to measure the unique value humans bring through creativity, emotional intelligence, and ethical judgment, and how to build a new distribution system on that basis.

From the Go AI Lee Sedol faced to today’s intelligent agents that design algorithms, diagnose diseases, and schedule factories, it has taken ten years. AGI will not appear overnight as a “super brain”; it is a process of steadily expanding capability boundaries, gradually approaching and then surpassing human learning efficiency.

We may never create an intelligence that is philosophically identical to humans. However, we are building countless systems that exceed humans in learning and execution efficiency for specific tasks and can transfer this capability from one domain to another. When these systems connect, the era we call AGI will have effectively arrived.
